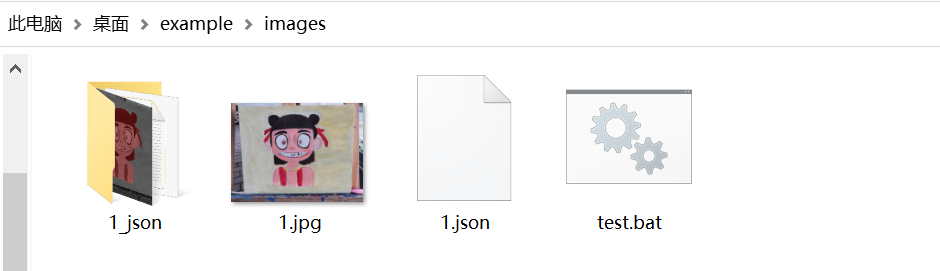

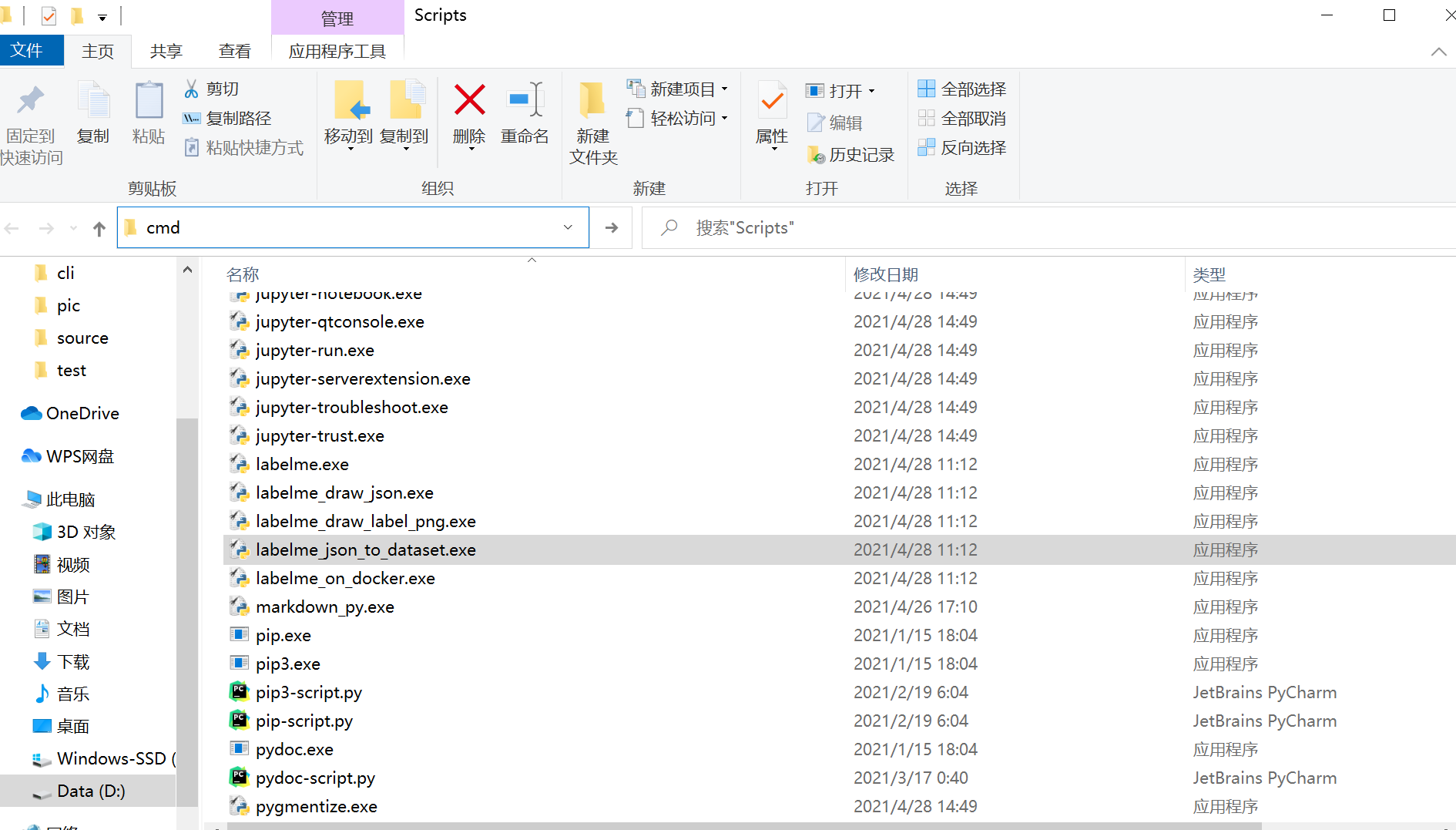

Figure 1: Examples of collected images

Labeling data

After gathering enough images, it's time to label them so your model knows what to learn. To label the data, you will need to use labeling software.

For object detection, we used LabelImg, an excellent image annotation tool supporting both the PascalVOC and YOLO formats. For image segmentation and instance segmentation, there are multiple great annotation tools available, including VGG Image Annotation Tool, labelme, and PixelAnnotationTool. I chose labelme because it is simple to both install and use.

Labelme can be installed using pip:

    pip install labelme

After installing Labelme, you can start it by typing labelme on the command line. Now you can click on "Open Dir", select the folder with the images inside, and start labeling your images.

Figure 3: Labeling images

After you're done labeling the images, I'd recommend splitting the data into two folders: a training folder and a testing folder. Doing this will allow you to get a reasonable estimate of how good your model really is later on.

Convert your data-set to COCO-format

Now that you have the labels, you could get started coding, but I decided to also show you how to convert your data-set to COCO format, which makes your life a lot easier.

COCO defines several annotation types, including object detection, keypoint detection, stuff segmentation, panoptic segmentation, and image captioning. Instance segmentation uses the object detection format, in which each annotation carries a segmentation mask in addition to a bounding box. The stuff segmentation format is identical to and fully compatible with the object detection format.

Training the model works just the same as training an object detection model. The only difference is that you'll need to use an instance segmentation model instead of an object detection model.

    import os

    from detectron2 import model_zoo
    from detectron2.config import get_cfg
    from detectron2.engine import DefaultTrainer

    # "microcontroller_train" must already be registered with Detectron2.
    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"))
    cfg.DATASETS.TRAIN = ("microcontroller_train",)
    cfg.DATASETS.TEST = ()  # no evaluation while training
    # Start from the COCO-pretrained Mask R-CNN weights.
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml")
    # Solver settings and cfg.MODEL.ROI_HEADS.NUM_CLASSES should be set for your own dataset.

    os.makedirs(cfg.OUTPUT_DIR, exist_ok=True)
    trainer = DefaultTrainer(cfg)
    trainer.resume_or_load(resume=False)
    trainer.train()

Figure 4: Training

Using the model for inference

After training, the model automatically gets saved into a pth file. This file can then be used to load the model and make predictions. For inference, the DefaultPredictor class will be used instead of the DefaultTrainer.

    import random

    import cv2
    import matplotlib.pyplot as plt
    from detectron2.engine import DefaultPredictor
    from detectron2.utils.visualizer import ColorMode, Visualizer

    cfg.MODEL.WEIGHTS = os.path.join(cfg.OUTPUT_DIR, "model_final.pth")
    cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = 0.5
    cfg.DATASETS.TEST = ("microcontroller_test", )
    predictor = DefaultPredictor(cfg)

    # get_microcontroller_dicts loads the dataset dicts; it is defined when the dataset is registered.
    dataset_dicts = get_microcontroller_dicts('Microcontroller Segmentation/test')
    for d in random.sample(dataset_dicts, 3):
        im = cv2.imread(d["file_name"])
        outputs = predictor(im)
        v = Visualizer(im[:, :, ::-1],
                       instance_mode=ColorMode.IMAGE_BW)  # remove the colors of unsegmented pixels
        v = v.draw_instance_predictions(outputs["instances"].to("cpu"))
        plt.imshow(cv2.cvtColor(v.get_image()[:, :, ::-1], cv2.COLOR_BGR2RGB))
        plt.show()

Figure 5: Prediction example

Conclusion

If you have any questions or want to chat with me, feel free to contact me via EMAIL or social media.

Labelme is a graphical image annotation tool. It is written in Python and uses Qt for its graphical interface. Its examples include a VOC dataset example of instance segmentation, other examples (semantic segmentation, bbox detection, and classification), and various primitives (polygon, rectangle, circle, line, and point).

Features: image annotation for polygon, rectangle, circle, line and point; image flag annotation for classification and cleaning; GUI customization (predefined labels / flags, auto-saving, label validation, etc.); exporting VOC-format datasets for semantic/instance segmentation; and exporting COCO-format datasets for instance segmentation.

Installation options: platform-agnostic installation with Anaconda (you need to install Anaconda first); platform-specific installation for Ubuntu, macOS, and Windows; and pre-built binaries from the release section.

Usage:

    labelme  # just open gui

    # tutorial (single image example)
    cd examples/tutorial
    labelme apc2016_obj3.jpg  # specify image file
    labelme apc2016_obj3.jpg -O apc2016_obj3.json  # close window after the save
    labelme apc2016_obj3.jpg --nodata  # not include image data but relative image path in JSON file
    labelme apc2016_obj3.jpg --labels highland_6539_self_stick_notes,mead_index_cards,kong_air_dog_squeakair_tennis_ball  # specify label list

    # semantic segmentation example
    cd examples/semantic_segmentation
    labelme data_annotated/  # Open directory to annotate all images in it
    labelme data_annotated/ --labels labels.txt  # specify label list with a file

For more advanced usage, please refer to the examples.

Command-line arguments: --output specifies the location that annotations will be written to. If the location ends with .json, a single annotation will be written to this file. Only one image can be annotated if a location is specified with .json. If the location does not end with .json, the program will assume it is a directory.
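Labelme writes one JSON file per annotated image, and the tutorial relies on having those labels in COCO format. The sketch below shows roughly what such a conversion involves, using only the standard library. It assumes the usual Labelme fields (imagePath, imageHeight, imageWidth, and shapes with label, points and shape_type) and handles polygon shapes only. The function names, the file name board_01.jpg and the label chip are hypothetical, chosen for illustration; this is not the converter used in the tutorial.

```python
import json


def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def labelme_to_coco(records, label_names):
    """Build a COCO-style dict from parsed Labelme JSON records.

    Only polygon shapes are converted; other shape types are skipped.
    """
    category_ids = {name: i + 1 for i, name in enumerate(label_names)}
    coco = {
        "images": [],
        "annotations": [],
        "categories": [{"id": cid, "name": name} for name, cid in category_ids.items()],
    }
    for image_id, record in enumerate(records, start=1):
        coco["images"].append({
            "id": image_id,
            "file_name": record["imagePath"],
            "height": record["imageHeight"],
            "width": record["imageWidth"],
        })
        for shape in record["shapes"]:
            if shape.get("shape_type", "polygon") != "polygon":
                continue
            points = [(float(x), float(y)) for x, y in shape["points"]]
            xs = [x for x, _ in points]
            ys = [y for _, y in points]
            coco["annotations"].append({
                "id": len(coco["annotations"]) + 1,
                "image_id": image_id,
                "category_id": category_ids[shape["label"]],
                # COCO stores each polygon as a flat [x1, y1, x2, y2, ...] list.
                "segmentation": [[c for point in points for c in point]],
                # COCO bounding boxes are [x, y, width, height].
                "bbox": [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)],
                "area": polygon_area(points),
                "iscrowd": 0,
            })
    return coco


# Hypothetical record with the fields Labelme stores for one image.
example = {
    "imagePath": "board_01.jpg",
    "imageHeight": 480,
    "imageWidth": 640,
    "shapes": [
        {"label": "chip", "shape_type": "polygon",
         "points": [[10, 20], [110, 20], [110, 70], [10, 70]]},
    ],
}
coco = labelme_to_coco([example], ["chip"])
print(json.dumps(coco["annotations"][0], indent=2))
```

In practice you would load each record with json.load from the files Labelme saved, run the conversion once for the training folder and once for the testing folder, and write each result out with json.dump.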
